It is funny because whenever I ask AI to write PowerShell scripts, they never work.

Ah yes, the bourgeoisie really know their game if they leave young adults unemployed en masse for a while. Not at all linked to social transformations.

"We had been working with their AI tool for a while, and it was absolutely not at the point of being capable of writing lessons without humans.”

Lol, LMAO this is gonna be a disaster once the bubble crashes and current models undergo recursive degradation.

I do think LLMs are going to start getting worse once more data is fed into them. And then, instead of admitting this, these companies will have so much capital that they will just tune the AI to say exactly what they want it to every time. We already see some of this, but it will get worse.

Reject LLMs branded as AI, retvrn to 9999999 nested if statements.

> LLMs are going to start getting worse once more data is fed into them

I’ve been hearing this for a while, has it started happening yet?

And if it did, is there any reason why people couldn’t switch back to an older version?

I knew the answer was “Yes” but it took me a fuckin while to find the actual sources again

https://arxiv.org/pdf/2307.01850 https://www.nature.com/articles/s41586-024-07566-y

the term is “Model collapse” or “model autophagy disorder” and any generative model is susceptible to it

As to why it has not happened too much yet: curated datasets of human-generated content with minimal AI content. If it does: you could switch to an older version, yes, but to train new models with any new information past a certain point you would need to update the dataset while (ideally) introducing as little AI content as possible, which I think is becoming intractable with the widespread deployment of generative models.

The witting or unwitting use of synthetic data to train generative models departs from standard AI training practice in one important respect: repeating this process for generation after generation of models forms an autophagous (“self-consuming”) loop. As Figure 3 details, different autophagous loop variations arise depending on how existing real and synthetic data are combined into future training sets. Additional variations arise depending on how the synthetic data is generated. For instance, practitioners or algorithms will often introduce a sampling bias by manually “cherry picking” synthesized data to trade off perceptual quality (i.e., the images/texts “look/sound good”) vs. diversity (i.e., many different “types” of images/texts are generated). The informal concepts of quality and diversity are closely related to the statistical metrics of precision and recall, respectively [39]. If synthetic data, biased or not, is already in our training datasets today, then autophagous loops are all but inevitable in the future.
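If it helps to see the self-consuming loop in miniature, here is a toy sketch. It is not the experiment from either paper: the "model" is just a Gaussian fitted to its training set, the "real" distribution is assumed to be N(0, 1), and `run_loop` and `real_fraction` are names made up for this illustration. Each generation is trained on samples from the previous generation's fit, optionally mixed with fresh real data.

```python
import random
import statistics


def run_loop(generations, n_samples, real_fraction, seed=0):
    """Refit a Gaussian generation after generation.

    Returns the fitted standard deviation of every generation.
    real_fraction is the share of each training set drawn fresh from the
    assumed "real" distribution N(0, 1); the rest is sampled from the
    previous generation's fitted model (synthetic data).
    """
    rng = random.Random(seed)
    n_real = round(real_fraction * n_samples)
    n_synth = n_samples - n_real

    # Generation 0 is trained on real data only.
    data = [rng.gauss(0.0, 1.0) for _ in range(n_samples)]
    mu, sigma = statistics.fmean(data), statistics.stdev(data)
    history = [sigma]

    for _ in range(generations):
        # Synthetic samples come from the previous model, not from reality.
        synthetic = [rng.gauss(mu, sigma) for _ in range(n_synth)]
        real = [rng.gauss(0.0, 1.0) for _ in range(n_real)]
        data = synthetic + real
        mu, sigma = statistics.fmean(data), statistics.stdev(data)
        history.append(sigma)
    return history


# Hypothetical settings: tiny training sets make the effect show up quickly.
fully_synthetic = run_loop(generations=500, n_samples=10, real_fraction=0.0)
with_fresh_data = run_loop(generations=500, n_samples=10, real_fraction=0.5)

print(f"fully synthetic: std {fully_synthetic[0]:.3f} -> {fully_synthetic[-1]:.3g}")
print(f"50% fresh real:  std {with_fresh_data[0]:.3f} -> {with_fresh_data[-1]:.3g}")
```

In the fully synthetic run the fitted spread tends to shrink toward zero, which is the loss of diversity the quote describes. Mixing fresh real data into every generation keeps it from collapsing in this toy setup. Real generative models are far more complicated, so treat this as intuition only.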

Sometimes, yeah, you can see it. Not only with updates but within a conversation: models degrade in effectiveness long before the context window is reached. Things like image generation tend to get worse after more than 2 edits, even if the image seed is given.

The US climate is getting increasingly hotter while young, alienated men are becoming increasingly jobless. Looks like you’re speedrunning the Guatemalan experience. Hope you enjoy the increase in violent crime.

Trump’s telling them that it’s the illegal immigrants’ fault, so that should make it better.

Can we stop talking about Duolingo? I know why all these online writers talk about it: it’s because tech employees thought they had found one of those cushy jobs for life, and now the rug is being pulled out and it’s example A. However, automation taking over jobs at ports is a much larger-scale issue and is an example of how automation will infiltrate every sector, not only programming.

But I just read an article from techbro.mag.richbootlickers.org that said I was only imagining this and AI will make a perfect utopia for everyone forever!

Mr Merchant, please lead the luddite uprising against the chat bots

When the AI bubble pops, it will take the US with it.

Good.

Unfortunately I don’t expect Americans to realize that C-suite jobs can just as easily be replaced by AI. We better keep on anthropomorphizing this shit and not treating it like a tool!

C-suite jobs are the jobs most easily replaced by AI, and arguably the only class of job that current AI models are capable of performing adequately.

They’re task-minimal jobs that don’t require any real understanding of anything. Basic logical decision-making and long-term analysis can outperform the typically self-centered and petty motivations most C-suites apply to make decisions that maximize present-quarter profits over everything else.

Jira can already be programmed to annoy me about open issues that have not had an update in a couple days. Program it to message me at inconvenient times asking me stupid questions about the project that I’ve been working on for 2 years, program it to deny me a raise, and you could replace half of the middle managers at my company.

Learn to ~~code!~~ farm!

I can’t wait for the next AI winter.

This AI shit is going to deskill us back to the stone age LOL. Real subsumption of thought.

subsumption of thought into models that don’t think

The CEO of the company I work at was just complaining to me and my colleague earlier today that his software engineers aren’t using enough AI to do their work…

The Butlerian Jihad, also known as the Great Revolt as well as commonly shortened to the Jihad, was the crusade against computers, thinking machines, and conscious robots.

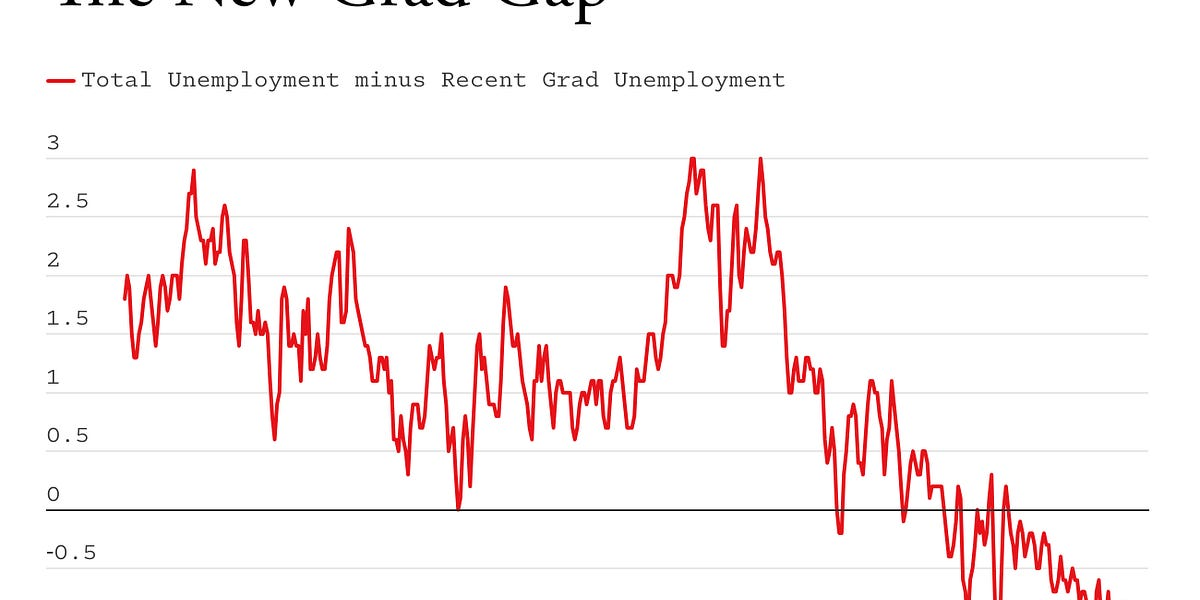

I’m skeptical given the downward trend on that graph started well before winter 2022 (when ChatGPT first came out), and even before covid.

We never recovered from the crash of 2008.

Chad Anki > Virgin Duolingo

I don’t like it.